150+

Minutes

Saved each day waiting for reports.

3200+

Lines

Of code documentation generated.

0

Incidents

Caused during the whole project.

The Challenge

The shift report job ran every morning to aggregate the raw data from the previous production day and generate the automated reports. Its runtime had grown noticeably, and at one point it crashed and did not finish in time for the meeting that depended on it.

Background of the Project

The legacy software had been in use for more than a decade. It collected the raw data from the stations, aggregated it for the whole previous day, and generated the shift reports. A former employee of the client had customized the software heavily, and these customizations were not documented.

These reports are essential for the morning round on the shop floor and for the teams' KPIs. The software ran on the production system, with no test environment and no version control for the customized code.

Together, these factors made it a high-risk project.

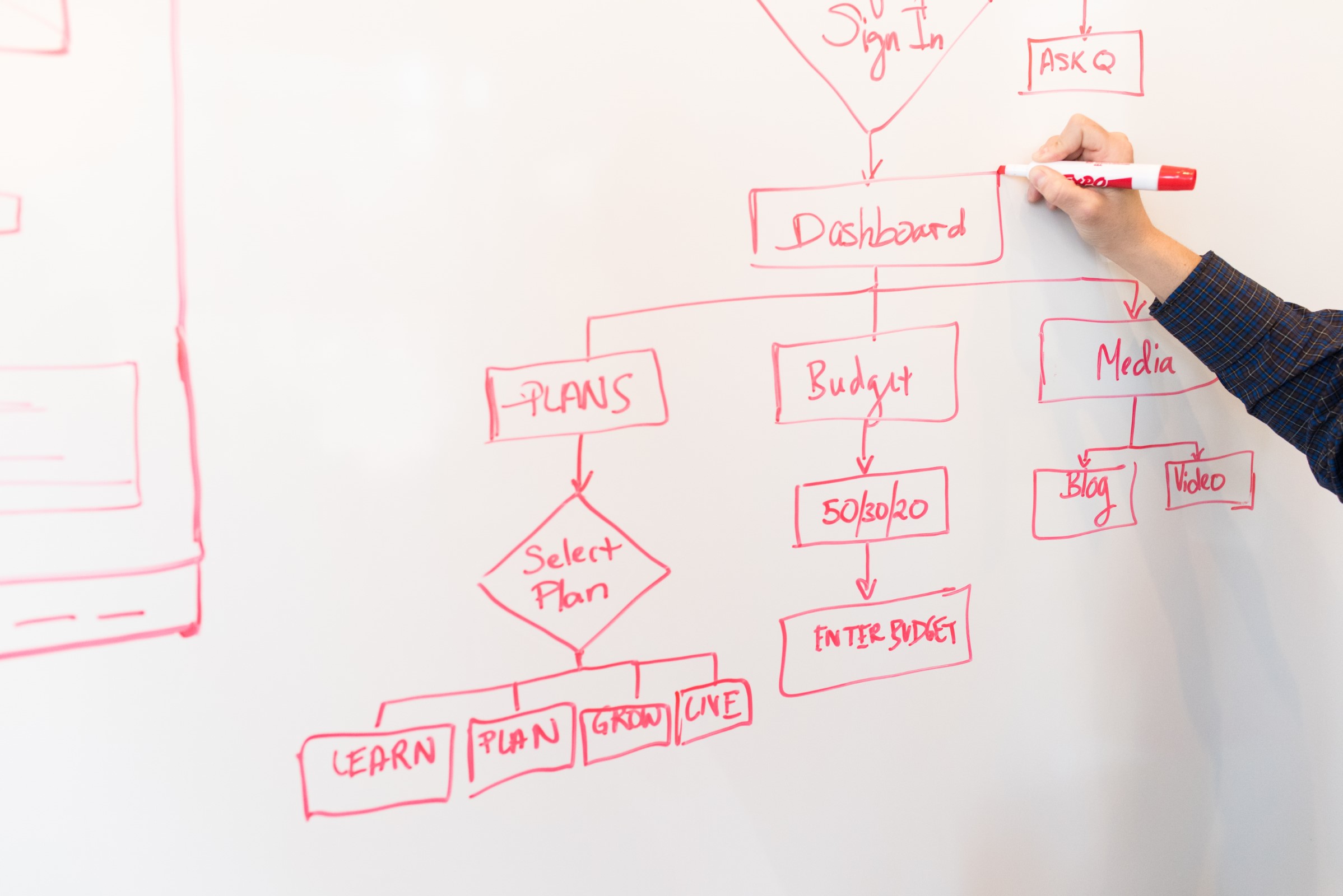

The Process

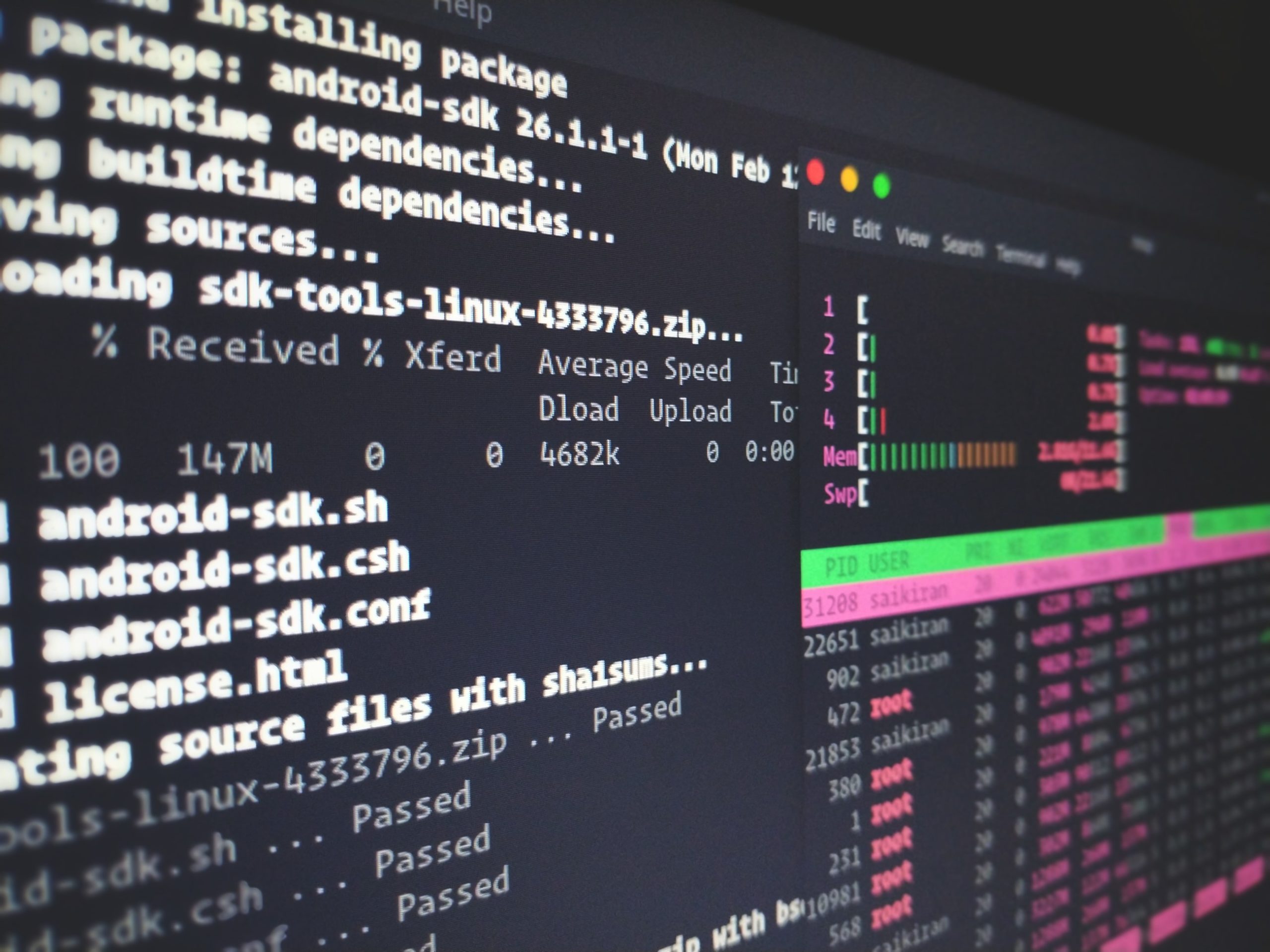

The project started with an analysis of the application and its data structures, so that we first understood what the application processed. We then reverse engineered the application using all available information: source code, input data, logs, and generated output. The reverse engineering had to be done on the production system while production was ongoing.

The reverse engineering of the application was the first step towards code refactoring.

After breaking down the customized application’s components and understanding its logic, we were able to document how the data was aggregated and processed. From there we began the code refactoring, starting by renaming the mysterious variables “var1”, “var2”, …, “varN” as well as the cryptic methods and classes.
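To illustrate the kind of renaming described above, here is a minimal sketch. It is not the client's code: the function names, the record layout `(station_id, shift, units)`, and the sample values are all assumptions made up for this example. It shows a legacy-style function next to a renamed version and checks that both return the same result on the same input.

```python
# Hypothetical legacy-style function: the names carry no meaning.
def proc(var1, var2):
    var3 = {}
    for var4 in var1:
        if var4[1] == var2:
            var3[var4[0]] = var3.get(var4[0], 0) + var4[2]
    return var3


# Same logic after renaming; the behavior is intentionally unchanged.
def total_units_per_station(records, shift):
    """Sum the units per station for one shift.

    Each record is assumed to be a (station_id, shift, units) tuple.
    """
    totals = {}
    for station_id, record_shift, units in records:
        if record_shift == shift:
            totals[station_id] = totals.get(station_id, 0) + units
    return totals


# Made-up sample data used to confirm that the rename changed nothing.
sample = [("S1", "early", 10), ("S2", "early", 4), ("S1", "late", 7), ("S1", "early", 5)]
assert proc(sample, "early") == total_units_per_station(sample, "early")
```

Comparing old and new output on identical input is one way to gain confidence in a rename when no test environment exists.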

Finally, we optimized the data aggregation and processing, reducing the aggregation and report generation time from over 3 hours to less than 30 minutes! We were also able to rerun the code for the previous 3 weeks to fill in the data missing because of the crashes, without impacting production.
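A rerun over past days, like the 3-week backfill mentioned above, could be sketched as follows. This is an illustration under stated assumptions, not the project's implementation: `missing_report_days`, `backfill`, and the `generate_report` callback are hypothetical names, and the set of days that already have a report is assumed to be known up front.

```python
from datetime import timedelta


def missing_report_days(end_day, days_back, existing_days):
    """Return the days in the window that have no report yet, oldest first.

    The window covers the `days_back` days before `end_day` (end_day excluded).
    """
    window = [end_day - timedelta(days=offset) for offset in range(days_back, 0, -1)]
    return [day for day in window if day not in existing_days]


def backfill(end_day, days_back, existing_days, generate_report):
    """Generate reports only for the missing days; safe to run repeatedly."""
    filled = []
    for day in missing_report_days(end_day, days_back, existing_days):
        generate_report(day)  # hypothetical callback that rebuilds one day's report
        existing_days.add(day)
        filled.append(day)
    return filled
```

Skipping days that already have a report keeps the rerun idempotent, so an interrupted backfill can simply be started again.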

Conclusion

Refactoring the code was the best option to fix the errors and optimize the application’s runtime without breaking the existing functionality or exceeding the budget. Reverse engineering the application was necessary in any case to clarify logic and dependencies, as the code itself was the only available documentation. Cleaning up the code and adding documentation also made it possible to include the application in monitoring and support.

Improved error handling and application logging.

The added error handling greatly improved fault tolerance. The extended logging made it possible to monitor the application in real time, so the operations team could react as soon as something went wrong and had useful error messages for troubleshooting.
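One common way to combine fault tolerance with useful log messages is to process each unit of work independently and log failures with context. The sketch below assumes the aggregation can be split per station. The logger name `shift_report`, the function `aggregate_all`, and the `aggregate_station` callback are hypothetical and not taken from the project.

```python
import logging

logger = logging.getLogger("shift_report")  # hypothetical logger name


def aggregate_all(stations, aggregate_station):
    """Aggregate each station independently so one failure does not abort the run."""
    results, failed = {}, []
    for station_id in stations:
        try:
            results[station_id] = aggregate_station(station_id)
            logger.info("Aggregated station %s", station_id)
        except Exception:
            # logger.exception logs at ERROR level and includes the traceback.
            logger.exception("Aggregation failed for station %s", station_id)
            failed.append(station_id)
    return results, failed
```

Returning the list of failed stations lets the caller decide whether to retry, alert the operations team, or publish a partial report.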

Production was never interrupted.

All of this happened on the production system without any noticeable impact on daily operations, which were time-sensitive given the high throughput of the system.